May 29, 2026

Karaganov's argument for a nuclear war with NATO seems to be somewhat mis-interpreted in our media, but at least someone was listening. Here's what Mearsheimer said on DDDD:

DAVIS: What do you make of this situation?

MEARSHEIMER: What's going on here has very little to do with the battlefield. It's important to emphasize that the Ukrainians with assistance from the West are increasing the number and the sophistication of attacks on the Russian homeland. And furthermore, you have all this warlike rhetoric in the west to go along with these western aided attacks on the Russian homeland. And the Russians have come to the conclusion uh that they have no sufficient deterrent against the west anymore or against Ukraine in terms of attacking the Russian homeland and that something has to be done to rectify this situation. They ... in other words have to reestablish deterrence and the Russians basically have concluded that the only way that this can be done is to attack targets in Europe and in effect you're saying attacking targets in NATO member states.

It's the only way to send a clear message and reestablish deterrence. And as Karaganov argues, and it's very important to emphasize that Karaganov's views are shared by many, including in the upper echelons of the Russian elite, you start with conventional weapons. And if that doesn't work, if attacking a European state with conventional weapons doesn't cause deterrence, doesn't cause the Europeans to back off and doesn't cause the Europeans to tell the Ukrainians to back off, then you use a limited number of nuclear weapons. And you do that not to win the war in any military sense, but you use that to send a powerful signal to the Europeans that you mean business, that you are so serious that you're willing to use nuclear weapons and throw all states out on the slippery slope to oblivion. And you're telling the West that the last chance to avoid going down the slippery slope is for them to cease attacking uh Russia, to cease helping Ukraine to attack Russia. So that's what's going on here.

And the problem is that in the West, most people don't take Russian threats seriously. Uh, and they think, people in the West think, and the Ukrainians think that you can continue to poke the Russians in the eye, and when they complain or say that they're going to take drastic action against you, you can just brush it off. They won't do that. They haven't done it in the past, they won't do it now. So, let's just keep poking them in the eye.

So as you can see, there's more to Karaganov's proposals than "let's nuke NATO". Mind, I'm not saying nuking NATO is going to work out for him, Russia, or the world any more than attempts to bomb Iran worked out for Trump.

One other thing: I have absolutely heard people say that Russians won't do anything. Dara Massicot argued it in an episode of the War on the Rocks podcast:

MASSICOT: So, if they (Russians) want to have attitude with us, I highly recommend that we remind them of that fact. And I have lots of other suggestions for how we can remind the Russians of things. Just ask me.

MODERATOR: I'm asking you. You have a microphone.

MASSICOT: Okay. Well, here's one. So, you know, how did Russians end up in Syria? By the Russians telling the legitimate government of Syria invited them to come in. Well, the Zelensky government has invited us to come in to provide some sort of air defense protection of Western Ukraine. They invited us in for multiple reasons. So, if Russians want to have an attitude about foreign presence in Ukraine, I have two pieces of advice.

Remind them of that fact. And then also, my advice for anyone considering putting boots on the ground, they really turn up the noise on the escalation question against you. Ignore that. But if you're going to put people into Ukraine, do it quickly. Don't give them the opportunity to do anything about it. Just have them there. They're not going to do anything once you're there, but just don't have a long lead. Put them in. My two cents, my soap box.

Many such cases.

They are going to continue thinking that Russians will not do it until Berlin is nuked.

Posted by: Pete Zaitcev at

08:25 PM


March 01, 2026

This is the seminal long-tweet, where AW formulated the thesis that The Davos Regime does not deal with other power centers as peers, but with rebels and criminals, and what the first corollaries of it are. Without further ado:

I posted the other day that the story of the colossal sanctions failure and backfire against Russia is a case study in the benefits of forced reshoring. Russia is not some rentier petrostate that needs foreign contractors to keep its lights on, it is a developed country with a highly educated population and vast latent productive capacity. With Western-facing trade largely cut off except for some high-demand commodities, and domestic demand for goods and services higher than ever thanks to the war, those jobs in productive industries that were brain-drained out of Russia to the West in the 1990s simply returned home. People fled the service industries for higher-paying factory work, and rising wages have fueled an economic boom.

The thing is that this domestic growth model, although excellent for the ordinary man on the street, is anathematic to oligarchs. It's inefficient - one of the first economic theories they teach you is how free trade creates greater net prosperity because of the efficiencies of specialization. Of course they leave a couple things out in Economics 101: (1) sufficiently large countries can actually be "good at everything" and have no real need for foreign trade as all efficient specialization can occur between internal trade nodes; and (2) the real world is not an economic supply-demand chart, the benefits from this complex arrangement will be engineered to flow primarily to the people managing it. Paying for Chinese goods with American banking-sector money is very efficient but only economically benefits a small group of transnational elites. Ergo the "Davos Regime" - this highly rarified group of people meet up every year for the World Economic Forum in the city of Davos, Switzerland.

So where does the Rules-Based International Order enter into it? Well it's quite simple - you can't have an economic bloc without some level of shared governance to enforce the bloc rules, and these governance structures tend to grow with time. It's why the European Coal and Steel Community* dictates the policy of most ostensibly sovereign European countries now. And with the emergence of a planetary economic bloc after the Cold War - generating astronomical wealth to be creamed off by a new generation of oligarchs sophisticated enough to manage its incredible complexity - a putative world government was going to emerge almost as though by the laws of geopolitical gravity.

* now known as the European Union

The "Rules-Based Order" is thus simply the domestic policy of the Davos Regime - an entity that has no foreign policy because it purports to govern mankind and has arrogated to itself a monopoly of legitimate force worldwide. The Davos Regime conducts no real diplomacy and recognizes no other sovereigns, only rebels against its rule.

This monopoly on violence and diplomatic atrophy, by the way, precisely explains the behavior of Western leaders of late. Brutalizing protesters against their rule at home - or bombing their enemies abroad - is legitimate because they are representatives of a global sovereign with a monopoly on legitimate force. Lesser entities not part of the Davos Regime have no such rights, and any use of force on their part is presumed to be criminal in nature and to be punished severely. Similarly, the institutional collapse of Western diplomacy is easily explained by the fact that these diplomats do not view themselves as dealing with coequal sovereigns but rather with lesser, subject entities to be dictated to, and to whom they lack any real reciprocal obligations.

You can see immediately why Russia would drive the Davos Regime absolutely up the wall.

I posted about the lessons from the forced re-shoring previously, so this topic is congenial for me.

Posted by: Pete Zaitcev at

05:34 PM


February 21, 2026

Here's a take by Alexander Mercouris of The Duran, from the automated transcript on YouTube:

This was the most nakedly imperialistic speech that an American Secretary of State has given since I think the foundation of the republic in the 1780s. Perhaps there was an exception, which is the Theodore Roosevelt period, before the first world war and all of that, but I mean this is absolute naked imperialism. It was, you know, that the West has been in decline, but that it has now (to) reverse its decline. That the way to do this is basically for everybody to get behind the United States. That the United States is going to be utterly aggressive from this point forward. And that we can forget all about liberalism, globalism, all those nice fancy words. They've served their purpose. They don't really mean anything anymore. For it's raw power and that's all it is. And we're in this great enterprise to reestablish the empire in the strongest possible way. Using every tool at our disposal... You know, violence if we have to, military power, definitely economic coercion if that's what works. And we're going to do it for naked self-interest to preserve the West. We are not really any longer there to act as missionaries, to pretend as the rest of the world or even to ourselves that we are doing it for some greater, higher motive. Now that that is how the speech I have to say read to me.

Obviously he didn't put it quite as savagely as that but that was basically the meaning. And there was this extraordinary passage in which he compared the state of the west today and the state of the west before the second world war, when the West was embarked, you know, was still engaged in vast imperial enterprises, when the world was, you know, covered by European colonial empires and he almost spoke as if the dismantling of those empires because they were symptoms of the decline of Europe and of the West was a bad thing. Completely ignoring the fact, by the way, that it was the United States that was instrumental to a great extent, not entirely or not even principally, but it was instrumental in a great to a great extent in dismantling those empires.

Now, I have to say, not only was this, in my opinion, a savagely imperialist speech, it was also a complete betrayal of the MAGA American first non-interventionist principles that Donald Trump won election on back in 2024. Perhaps by now we should not be surprised about that.

But I have to also say that to me it looked completely utopian, utterly fantastic, as detached from reality as anything we've heard from the classical neocons, even more so perhaps. Maybe the United States could have entertained these fantastic ideas at the peak moment of its power in the 1940s. But today, when it is massively in debt, when it is de-industrializing itself, when it no longer accounts for such a major part of the global economy, I mean to me it seemed to be completely unreal. Anyway, that was my preliminary view of it.

There's more where that came from, such as an explanation why all the elite Euroscum applauded Rubio, and were sad later (see, The Faces Of Kaja Kallas).

Thanks Brickmuppet for the reminder.

Posted by: Pete Zaitcev at

05:54 PM


December 07, 2025

I'm quoting from the document as it's published:

C. Promoting European Greatness

American officials have become used to thinking about European problems in terms of insufficient military spending and economic stagnation. There is truth to this, but Europe’s real problems are even deeper.

Continental Europe has been losing share of global GDP — down from 25 percent in 1990 to 14 percent today — partly owing to national and transnational regulations that undermine creativity and industriousness.

But this economic decline is eclipsed by the real and more stark prospect of civilizational erasure. The larger issues facing Europe include activities of the European Union and other transnational bodies that undermine political liberty and sovereignty, migration policies that are transforming the continent and creating strife, censorship of free speech and suppression of political opposition, cratering birthrates, and loss of national identities and self-confidence.

Should present trends continue, the continent will be unrecognizable in 20 years or less. As such, it is far from obvious whether certain European countries will have economies and militaries strong enough to remain reliable allies. Many of these nations are currently doubling down on their present path. We want Europe to remain European, to regain its civilizational self-confidence, and to abandon its failed focus on regulatory suffocation.

This lack of self-confidence is most evident in Europe’s relationship with Russia. European allies enjoy a significant hard power advantage over Russia by almost every measure, save nuclear weapons. As a result of Russia’s war in Ukraine, European relations with Russia are now deeply attenuated, and many Europeans regard Russia as an existential threat. Managing European relations with Russia will require significant U.S. diplomatic engagement, both to reestablish conditions of strategic stability across the Eurasian landmass, and to mitigate the risk of conflict between Russia and European states.

It is a core interest of the United States to negotiate an expeditious cessation of hostilities in Ukraine, in order to stabilize European economies, prevent unintended escalation or expansion of the war, and reestablish strategic stability with Russia, as well as to enable the post-hostilities reconstruction of Ukraine to enable its survival as a viable state.

...

Our broad policy for Europe should prioritize:

* Enabling Europe to stand on its own feet and operate as a group of aligned sovereign nations, including by taking primary responsibility for its own defense, without being dominated by any adversarial power;

* Ending the perception, and preventing the reality, of NATO as a perpetually expanding alliance;

Lindsey Graham hardest hurt.

Posted by: Pete Zaitcev at

11:44 AM


March 27, 2025

By about 1989 it became abundantly clear that MISS needed a packet switching network, and it provided me an opportunity to make the biggest mis-step in my career.

MISS already had a store-and-forward network[1]. As a great example of convergent development, it was surprisingly similar to IBM RSCS, the backbone of BITNET. When Butenko implemented a gateway to RSCS, the biggest issue was that MISS had 10-character identifiers and RSCS had 8-character ones. My username, ZAITCEV, was one of the lucky ones that could be used as-is. But either way, it clearly was a dead end.
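To make the identifier mismatch concrete, here is a minimal sketch in Python of the check a gateway has to make. The 10- and 8-character limits come from the text above; the alias table and the function name to_rscs are hypothetical and only illustrate the problem, they are not a description of how Butenko's gateway actually resolved long names.

```python
MISS_MAX = 10  # MISS identifier length limit (from the text)
RSCS_MAX = 8   # RSCS identifier length limit (from the text)


def to_rscs(miss_name, aliases):
    """Map a MISS identifier to an RSCS one.

    Names that already fit in 8 characters pass through unchanged.
    Longer names need an explicit entry in `aliases`, a hypothetical
    table that somebody would have to maintain by hand.
    """
    if not 1 <= len(miss_name) <= MISS_MAX:
        raise ValueError("not a valid MISS identifier: %r" % miss_name)
    if len(miss_name) <= RSCS_MAX:
        return miss_name
    if miss_name not in aliases:
        raise LookupError("no RSCS alias for %r" % miss_name)
    return aliases[miss_name]


# A 7-character name is one of the lucky ones: it needs no alias.
print(to_rscs("ZAITCEV", {}))
# A made-up 10-character name only works with an alias entry.
print(to_rscs("LONGUSER01", {"LONGUSER01": "LONGUSR1"}))
```

Silent truncation would be the obvious alternative, but it can make two different MISS names collide on the RSCS side, which is why the sketch refuses to guess.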

Still, this gatewaying experience clearly demonstrated that developing a MISS-specific network was a non-starter. We needed to adopt someone else's network. But which one?

Butenko himself got deeply involved in hacking on the Apple Mac at the time, and Macs came with AppleTalk. It was a basic network with a rudimentary provision for routing, and it also featured ADSP, Apple's equivalent of TCP. It supported sharing of files and printers, but no virtual terminal. It ran over a bus driven by the serial ports, and over Ethernet.

Other competitors included:

DECnet was something mocking X.25 and other WAN networks, mostly, although it managed to support Ethernet. It was not particularly well documented, and I didn't have access to a reference implementation: the university had no VAX.

Novell's product was extremely popular at the time, so it was easy to find. However, it didn't seem well featured. There was no remote terminal that we needed. The documentation was somewhat vague. The main advantage of IPX was that it supported ARCnet, which was the only real LAN that I had available. Ethernet was much too expensive for me.

Microsoft's offerings LAN Manager and NetBIOS were so bad that I rejected them early on.

When I started investigating TCP/IP, I was somewhat overwhelmed by its scope and features. It was very obviously a better idea than the X.25 garbage, but even so it was a large suite and I sensed that I would not be able to implement it in any reasonable time frame. Also, TCP was the backbone of the system. Having just implemented the uucp g-protocol, I was apprehensive of an internet-capable virtual circuit protocol. But naked IP was almost completely useless.

In the end, I selected AppleTalk.

My reasoning was that I would not need ADSP at first (folder sharing could do without it), that quality documentation was available, and that there was a reference implementation. My first medium was ARCnet, for which I borrowed the RFC-1201 framing, only with a new protocol ID number (later, I tried to reserve that number with a standards body). I also borrowed SLIP to transmit AppleTalk datagrams to ES-1011. Although my AppleTalk island had no real Apple in it, I looked into PC-compatible ISA cards with Zilog 8530 USART. They existed to connect PCs to Macs, so I had a hope for interoperability.
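For readers who have not met SLIP: it is nothing more than a byte-stuffing scheme that marks datagram boundaries on a serial line, which is why it was easy to borrow for a non-IP payload. Below is a minimal Python sketch of the framing as described in RFC 1055. It is not the original MISS code, and the function names slip_encode and slip_decode are mine.

```python
from typing import List

# Special byte values defined by RFC 1055.
END = 0xC0      # marks the end of a datagram
ESC = 0xDB      # introduces an escaped byte
ESC_END = 0xDC  # ESC ESC_END stands for a literal END byte
ESC_ESC = 0xDD  # ESC ESC_ESC stands for a literal ESC byte


def slip_encode(datagram: bytes) -> bytes:
    """Frame one datagram for transmission over a serial line."""
    out = bytearray([END])  # a leading END flushes accumulated line noise
    for b in datagram:
        if b == END:
            out += bytes([ESC, ESC_END])
        elif b == ESC:
            out += bytes([ESC, ESC_ESC])
        else:
            out.append(b)
    out.append(END)
    return bytes(out)


def slip_decode(stream: bytes) -> List[bytes]:
    """Split a received byte stream back into datagrams."""
    frames = []
    current = bytearray()
    escaped = False
    for b in stream:
        if escaped:
            if b == ESC_END:
                current.append(END)
            elif b == ESC_ESC:
                current.append(ESC)
            else:
                current.append(b)  # protocol violation: keep the byte as-is
            escaped = False
        elif b == ESC:
            escaped = True
        elif b == END:
            if current:  # back-to-back ENDs produce no empty frames
                frames.append(bytes(current))
                current = bytearray()
        else:
            current.append(b)
    return frames


payload = b"\x01\xc0\xdb\x02"  # arbitrary bytes, including both special values
assert slip_decode(slip_encode(payload)) == [payload]
```

SLIP carries no protocol type field and no checksum, so both ends simply have to agree on what is inside the frames. That is all that is needed to carry AppleTalk datagrams instead of IP ones over a private link.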

What can I say, AppleTalk seemed like a good idea at the time.

Aside from betting on the wrong horse to begin with, my second biggest mistake was not realizing that the OS-level API was critically important for networking. Had I gone with TCP/IP, I would have known the role of sockets, but I didn't. As a result, MISS never gained any network API, and applications talking to the network relied on kludges that worked through an equivalent of ioctl(2).

[1] At about the same time, Makarov-Zemlyanski implemented the MISS network in the assembler of BESM-6. It permitted users of OS Dispak to exchange Internet e-mail globally through ES-1011 and my e-mail gateway.

[2] The name NERPA itself was a pun on Not-ARPA. Although, Nerpa was a kind of a freshwater seal, endemic to Lake Baikal.

Next: Memoir 8.

Posted by: Pete Zaitcev at

06:51 AM


November 01, 2024

Willy OAM (Matt) and History Legends (Alex) posted a video with a surprisingly deep discussion. I am dead serious about it: just about every other video like this is pure propaganda, shallow yapping. Not this one. Its main downside is the length: two and a half hours. I watched it a couple of times in chunks and in the background. Naturally, I have a few comments.

The condition of victory: we are too late for recognition of Donbass now. All of the 4 new regions are officially recognized, so now Russians cannot back down until they re-take Kherson.

In addition, Ukrainian terrorism is a problem. If Zelensky or his successor is allowed to sign a peace accord, nothing will change. They will continue to drone the heck out of Russian civilians, assassinate (like Dasha Dugina), send bombs (like Kerch bridge). Just because of this, Russia cannot stop without a regime change anymore.

In February 2022, when Russian soldiers were in the suburbs of Kiev, a possibility existed that the Kiev regime would take it seriously. The Russian delegation said: knock this shit off, or this is what's going to happen, understand? When the West vetoed it (by executing a top negotiator as a warning to Zelensky), that possibility was closed off.

Corruption: Matt and Alex talk as if the corruption is endemic to Ukraine. But it's not the whole picture. Ukraine was the dedicated corruption statelet for EU for decades. It began when Kuchma was President. The corruption was fostered and promoted by EU and US, top down. From Hunter and Burisma down to the last border guard, the whole society is now fully corrupt. You need decades and a generation change to uproot it. The effort is comparable to what Italy is doing to uproot mafia in the south.

East Ukraine versus West Ukraine: I feel like I'm dragging this high-brow talk down by bringing it up, but there was a great Chad westerner and Virgin easterner meme. It went like this:

Chad western Banderite: Earns money by smuggling and selling stolen goods. Doesn't give a shit about laws, toppled the government on the maidan. Happily speaks his weird dialect with over 9000 Russisms. Dreamed of war against the moskal since the 90s. Ran away from his first battle, is alive. His hometown wasn't bombed. Stole humanitarian aid, bought a new apartment. Shot a child, said it was a saboteur, got away with it.

Virgin eastern Ukrainian: Works 9 to 5 or owns a small business. Followed the law, voted in elections. Learned literary Ukrainian, tortures himself and his family with it. Didn't want the war, forced to shout "akhmat sila" with face in the dirt. Fights Russians to the end, his body will never be buried. His hometown was destroyed in fighting. Humanitarian aid didn't come, again. Fired a T-90, hit a civilian home, the judge in Donetsk will sentence him for genocide.

And for the coda: Virgin believes in a united Ukrainian people. Chad doesn't consider East Ukrainians to be Ukrainians, or human at all.

Now that I successfully injected shallow lewdness, check out the video. One extremely interesting theme in it was an over-arching one, and one the duo didn't name explicitly, but discussed in some depth: how come Russia is winning, while it obviously has fewer men and fewer resources than Ukraine fully backed by NATO? Yes, the population of Ukraine is smaller, but they can mobilize a far greater share of it, because NATO produces everything they need. Russia has the concern of ruining the economy, but Ukraine has no such concern.

Alex in particular pointed out that there was no objective reason why this scenario of a crushing NATO victory wasn't realized. It was purely a series of bad decisions by Kiev, Zelensky, and NATO. We can speculate that it was largely driven by the debilitating effects of pervasive corruption. Russia is winning because the enemy is fully corrupt. There is a lesson for America in this.

Posted by: Pete Zaitcev at

07:37 PM


March 05, 2024

The chatter about sending NATO troops to Ukraine has been around for months, but just like globohomo seeking a formula for a non-nuclear war with Russia, it took a while to find a suitable approach for the escalation. The latest rumored plan is to send NATO troops to the border of Belarus, freeing up a few more Ukrainians to die at the Russian front.

The question is, what is next?

A key corollary of The War to The Last Ukrainian was running out of Ukrainians eventually. Seems obvious in retrospect, but we are reaching the point a little sooner than expected.

As I wrote before, the globohomo plan was to throw another nation into the war next, and then another, and continue until all Russians and New Europeans are dead. And presumably then Africans are used to re-populate the land, although I'm not sure WEF gave the plan enough thought. We don't need to think about such a far away future either.

Sending Polaks to guard the border with Belarus is the obvious next step. Inevitably, a stray missile or some other incident will happen, and there you are: Poland is at war with Russia, while Brussels and D.C. remain on the sidelines, rub their hands, and cackle.

The point of view of RAND and defense of American interests did not prevail.

UPDATE: Did I call it or what (lol). Even a Russian "expert" can see it coming. Here's what I found in Russian language media — an article by one Evgeny Pozdnyakov quoting "Dr." of Military Sciences Konstantin Sivkov:

"This way, we are going to deal with slow and gradual dragging of individual European states into the war with Russia. The eastern countries of the region may become the first victims. In time, a risk exists of expanding the zone of the conflict westward. The core of the Washington's plan is to have states enter the conflict as independent actors, without forcing the alliance [NATO — zaitcev] to intervene".

The difference here is that he thinks that the U.S. Government is calling the shots and making the plan for the global war. But I consider the global transnational elite as a whole to be the motive force, and the U.S. Government merely a component of it, and only insomuch as it's captured by the globohomo. Theoretically speaking, it's possible for the U.S. to refuse to participate and stick to its own interests. That is what the RAND report called for. But the events showed that the capture of our government was too strong, so the people who commissioned and produced the RAND report did not get their way.

Posted by: Pete Zaitcev at

03:34 PM


December 06, 2023

In the past couple of years, when someone asked me how long I expected the war to continue, I answered that it's going to be very long, potentially about 20 years. I said that because the ruling globohomo[1] is extremely malignant, vindictive, and above all hungry for power. They cannot tolerate a formation of any alternative power centers, and having put Russia into a position of an adversary, they have no choice but to defeat it. Otherwise, the very foundations of their power will be shaken.

However, these motivations are not rational, and my assessment of them is founded on general observations of the kind of people we have in power (such as Ted Cruz and Jake Sullivan). In addition, although the U.S. is the backbone of globohomo's hard power, the country's interests are not in alignment with globohomo's interests. So, what if someone stopped to think what these interests were?

At the end of 2022, someone at RAND did just that, and produced a report, that takes about an hour or two to read. I am reluctant to quote just the conclusions, because the thinking from the position of American interests is very interesting. But surprisingly enough, the conclusion was that "... avoiding a long war is also a higher priority for the United States than facilitating significantly more Ukrainian territorial control."

A year after this report was written, we can see Ukraine running out of men[2]. My expectation was that once Ukraine is done, the globohomo will throw another nation into The War, most likely Poland. They will continue this until Russia is spent, was my thinking from the basics. They finally found a formula for waging a hot war with Russia without going nuclear, so of course they will want to exploit it. But what if someone in American ruling circles were to listen to RAND? What if they can break free from globohomo and act in the American interests? Then the war would be over and I would be wrong.

Either way, read the report if you have the time.

[1] Obviously "globohomo" is an online slur. But it is apt, and the best approximation that the serious thinkers produced was The Global Transnational Elite. I'm going to continue using the slur in the absence of an alternative.

[2] Articles on the topic are a dime a dozen today, but the one at WaPo was written before the failed counter-offensive of 2023. What an example of journalism.

Posted by: Pete Zaitcev at

11:15 AM


November 10, 2023

I have some significant concerns about these worrisome developments. And the adults seem not to care, which is one of the concerns.

I'll have to write on this topic later, but for now, let me capture something that Mark Felton said in a video:

The N.S.D.A.P. was essentially the party of right wing, nationalistic, lower middle class Germans (...). Adolf Hitler was from such a lower middle class background. So also Heinrich Himmler, Joseph Goebbels, and many of the other top leaders. And the lower middle class tastes and values permeated Nazi policy and the new society they sought to create.

Unfortunately, what Nazis offered to the disaffected was (national) socialism. The result is well known.

Posted by: Pete Zaitcev at

07:12 PM


August 15, 2023

A couple of days ago, someone in India noticed that the Russian mission started later than its Indian counterpart, but is going to land first, because the Indians chose an extremely long and involved trajectory, taking 40 days instead of 10 days. With national prestige on the line, Hindustan Times made the following interesting claim:

To send Luna-25 to the moon, Russia is using Soyuz 2.1, which is a powerful rocket that can give the necessary thrust to the spacecraft to reach the moon’s surface, instead of having to wait in earth’s orbit.

The Indian space agency, which launched its craft on-board the Launch Vehicle Mark-3, earlier known as the GSLV MK3, has a far less fuel capacity and thrust. It also has limitations of the payload capacity.

However, anyone who knows anything about space rockets will immediately recognize that the Indian LVM3 rocket is in fact about twice as big and powerful as the Russian Soyuz-2. Its launch mass is about 650 t vs 320 t, and the GTO performance is about 4 t vs 2 t for Soyuz.

Leaving aside just how journalists get away with boldly posting such false claims, what went wrong? Why did a far more powerful Indian rocket underperform in this mission? The expert consensus answer is a combination of two factors: poor mission planning and architecture of LVM3.

The Indian rocket is indeed very powerful, but calculations (not mine) show that it can only inject 2100 kg into a direct transfer orbit to the Moon. This happens because LVM3 is designed for comsats and uses a large 3rd stage, which ignites relatively early. For a Moon trip it contributes 3 km/s! Because of its size it is heavy, and that handicaps its performance in beyond-Earth missions.

Still, 2100 kg is more than the mere 1750 kg that Soyuz-2 managed. However, the Indians chose to split the mission into orbital and lander segments. The orbiter does pretty much nothing except provide a backup for communications, but it eats the mass budget. The mass of scientific instruments is about 30 kg on both missions, although 26 kg is taken by the rover in the Indian case.

Of course, the Indians will have the last laugh if Luna-25 lands in a crater that precludes direct communication with the control station on Earth.
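The penalty of a heavy final stage follows directly from the Tsiolkovsky rocket equation, and a few lines of Python make it visible. This is only a sketch: the specific impulse, propellant load, and dry masses below are made-up placeholders, not LVM3 or Soyuz data, and the function names are mine. Only the 3 km/s figure is taken from the text.

```python
import math

G0 = 9.80665  # standard gravity, m/s^2


def delta_v(isp_s, m_start_kg, m_end_kg):
    """Tsiolkovsky rocket equation: velocity gained by one burn."""
    return isp_s * G0 * math.log(m_start_kg / m_end_kg)


def payload_for_delta_v(isp_s, propellant_kg, dry_kg, dv_ms):
    """Payload a single stage can push through dv_ms.

    Solves dv = isp * g0 * ln((prop + dry + payload) / (dry + payload))
    for the payload mass.
    """
    mass_ratio = math.exp(dv_ms / (isp_s * G0))
    return propellant_kg / (mass_ratio - 1.0) - dry_kg


# Hypothetical stage: all four numbers below are illustrative assumptions,
# except that DV matches the 3 km/s mentioned in the text.
ISP = 440.0      # s
PROP = 10000.0   # kg of propellant
DV = 3000.0      # m/s

light = payload_for_delta_v(ISP, PROP, 1000.0, DV)  # lighter empty stage
heavy = payload_for_delta_v(ISP, PROP, 2000.0, DV)  # heavier empty stage

print("payload with the lighter stage: %.0f kg" % light)
print("payload with the heavier stage: %.0f kg" % heavy)
print("payload lost: %.0f kg" % (light - heavy))
```

The point of the exercise is that on the last stage every kilogram of empty stage mass comes out of the payload one for one, since the stage structure rides along all the way to the final velocity. Mass carried by lower stages is far cheaper, which is why a big upper stage sized for comsats hurts on a trip to the Moon.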

Posted by: Pete Zaitcev at

08:25 AM


April 18, 2023

The resilience of the Russian economy was a bit of a surprise to be sure [1], [2]. A year ago Russians found that no enterprise in the country made nails. Nails! It's no wonder that our experts expected the whole economy to unravel.

But on this topic, I'm concerned about something else: when Russians (re-)started production of previously imported goods, they received a product that is both of better quality and cheaper than the one before. I know of at least 2 examples that are 100% not fake news: cat food and bricks.

Leave the war aside for now and ask how this is possible. Why is it that international trade and markets did not settle onto the best product? How did it stay unbeknownst to consumers, so that their cats only received better food when trade links were broken by force? My inner libertarian cries very much while seeking answers.

Something like this happened in America too. When Trump chose to focus on regulation and protectionism, a legion of experts expressed grave concern that he was about to damage the well-being of Americans by destroying our foreign trade. But the result was an incredible economic growth and the longest period between recessions ever.

Honestly I suspect that thanks to the sanctions, Putin's regime is experiencing a bit of unintentional Trumponomics. The global elites did him a favor by destroying some of the worst rent-seeking, wealth-transferring schemes that were burdening the Russian economy. Oft evil shall evil mar and all that.

Keep in mind that sanctions absolutely work in targeted areas. For example, the Russian space industry is hurting greatly. Their GPS equivalent, GLONASS, is in jeopardy, teetering on the brink of coverage gaps, while the launches of all-domestic satellites keep being postponed. I'm not arguing against sanctions (although I'm against the overuse and abuse of them). But there's certainly more to the story than just some kind of weird failure of the sanction regime to bring Putin to his knees - something that has a lot of import to our own lives in America.

UPDATE 20250328: A discussion in X comments raises questions. If forced re-shoring is good, why is North Korea not the most prosperous country in the world?

Posted by: Pete Zaitcev at 09:03 AM


February 08, 2023

X: > she :FeelsCringeMan:

J: because he fled the country

J: that's why he was in ireland

S: oh, that makes sense

N: what did szhxir do?

S: made homemade tranny hormone meds in a bathtub and shipped it to children via USPS or something

N: ...

S: and bragged about this fact on social media

N: I like how these people think they have absolute immunity from every possible consequence

S: then proceeded to get KiwiFarms nuked, IIRC

S: J probably knows the actual details

S: ya, you'd think giving children any sort of medicine without their parent's knowledge would be enough

S: but lul tranny privilege

J: are you asking about this SPECIFICALLY

J: or

J: this entire's troon history

J: which is

J: INSANE

J: and AFFECTED THE ENTIRE INTERNET

J: LIKE

J: THE INFRASTRUCTURE

J: OF THE INTERNET

J: IS FOREVER CHANGED

J: BEACUSE OF THIS TROON

N: might as well link the whole story

J: There is no full story

J: the media literally printed this fags story without question

J: I don't think anyone ever summarized it

J: but basically

J: him and another troon literally run a fucking grooming discord

J: and send bath hormone pills they made by cooking plastic bags to children

J: and kiwifarms documented the entire thing

J: beacuse they had people inside the discord

J: now keep in mind that kiwifarms is troon central too

J: so anyways

J: that fag has to flee america

J: and goes to ireland

J: because of this

J: and then he contacts the media and claims he had to flee america because kiwifarm people attacked him when he was putting groceries in his car

J: so

J: this troon starts a campaign to take down kiwifarms

J: that makes mainstream media

J: around the world

J: and reddit joins the cause

J: and they harass cloudflare

J: at first cloudflare says they don't take down content unless it's illegal

J: but after a full month of them getting harassed

J: they relent

J: so kiwifarms hops from DOS to DOS service

J: but the other services

J: couldn't stop the GIANT FUCKING DOS that was hitting kiwifarms

J: which was LITERALLY AFFECTING TIER 1 ISPs

J: because troons were buying chink botnets like candy

J: so Kiwifarms got kicked even from a russian service because it was affecting their business because only cloudflare can stop such huge attacks

J: so then

J: kiwifarms is literally like

J: "FUCK IT WE'LL MAKE OUR OWN DATACENTER WITH DOS PROTECTION WITH BLACKJACK AND HOOKERS"

J: and they ACTUALLY SUCCEED

J: SO THEN

J: THE MEDIA AND TROONS

J: ASK TIER 1 NETWORK PROVIDORS

J: TO FUCK WITH KIWIFARMS ROUTING

J: AND AN AMERICAN ONE DID

J: WHICH IS

J: UNPRECEDENT

J: AND FUCKED UP

J: BECAUSE THEY DON'T EVEN DO THAT FOR PEDO SHIT

J: TIER 1 PROVIDORS NORMALLY NEVER INTERVENE

J: THEY'RE ALWAYS NEUTRAL NO MATTER WHAT

J: So this entire movement to take down kiwifarms finally dies down because reddit gets bored of it

Posted by: Pete Zaitcev at 09:27 AM


February 07, 2023

The Beretta 80X, announced at this year's SHOT Show, apparently allows cocked-and-locked carry mechanically. However, the manual says not to do it:

IF YOU ARE NOT READY TO FIRE

Engage the thumb safety by rotating the safety lever with a fully upward thumb pressure, so as to cover the red warning dot (the red dot is visible when the thumb safety is disengaged), causing the hammer to decock and rest on the hammer stop.

And in red font:

WARNING: Always ensure that the safety is fully engaged until ready to fire. A safety is fully engaged only when the safety can move no further into the safe position. A safety which is not fully engaged will not prevent weapon discharge.

The disparity between reality and the manual created some confusion and controversy.

Here's what a former R&D engineer, now a program manager at Beretta, wrote about it at PF:

As a TDA shooter and a Beretta employee talking about an Italian product (aka the European team wrote the manual with their legal counsel, and they are very safety-minded), I must insist on the decocked position for carry.

That said, technically speaking, the safety has been redesigned from the old gun, and that middle position is an intentional step with an active trigger disconnect, and this gun does have a firing pin block... Do with that information what you will...

Now, I'd really truly strongly recommend each shooter evaluate the safety detent strength and holster coverage before making any CCW decisions, and note our DA pull on that gun is easily in the 5.5# range. That extra length/trigger feedback in the first pull as a 'are you sure you want to put a hole in this?' is a major benefit of the TDA systems for bump in the night, while the modern lightweight pull isn't hurting your accuracy or slowing you down if you're positive that trigger needs to get pulled.

Personally, when I get mine, I'll be carrying mine in the same condition as I carry my PX4 Compact Carry.

Naturally, he had to recant soon thereafter. Users found that it was possible to make the gun fire in the "false safety" condition by pulling the trigger several times, or by pulling it sideways forcefully.

Posted by: Pete Zaitcev at 06:20 PM


November 29, 2022

Quoting from May 25, 1999:

"Question (Norwegian News Agency): I am sorry Jamie but if you say that the [Serbian] Army has a lot of back-up generators, why are you depriving 70% of the country of not only electricity, but also water supply, if he has so much back-up electricity that he can use because you say you are only targeting military targets?

"Jamie Shea: Yes, I'm afraid electricity also drives command and control systems. If President Milosevic really wants all of his population to have water and electricity all he has to do is accept NATO's five conditions and we will stop this campaign. But as long as he doesn't do so we will continue to attack those targets which provide the electricity for his armed forces. If that has civilian consequences, it's for him to deal with but that water, that electricity is turned back on for the people of Serbia."

Heh.

Posted by: Pete Zaitcev at 08:58 AM


August 08, 2022

Received a message from a friend yesterday. He's a CP, IR, CFI: the usual for a serious hobby pilot with an IT background (he's a fairly normal sysadmin - Cisco etc.)[2]. But on the side, he flew a Caravan for Martinaire for 4 months or so, and a King Air for a jump outfit a bit on weekends. And now, he accepted a job at an airline. Part 121, not a quasi-airline like Boutique.

He's going to spend a week getting typed in the ATR 76, then a month or so in Florida getting his ATP. As soon as he passes the checkride, he's getting into the right seat. The airline pays for everything.

All these years, the media kept making stories about "pilot shortage". And it always was bollocks. So I tuned it out this time around too, but things look different now.

I mentioned this to my wife. She said that she saw an interview with an airline CEO (I don't watch TV, but she does). The exec said something to the effect that, for decades, they sent people to furlough, then recalled them, and pilots always trundled obediently back. But not this time. They aren't returning, thus making the 2022 pilot shortage real.

If she had told me before I heard from my friend, I would have thought the exec was lying as usual.

Maybe some people don't want to ruin their health by getting jabbed with a dangerous non-vaccine, and the pay no longer makes ruining one's health worth it, even at majors. I dunno, that could be a factor. But it cannot explain the entire number of pilots not returning from the 2020 furloughs.

[2] For comparison, I have PP with 700 hours, no IR.

Posted by: Pete Zaitcev at 07:29 AM


March 10, 2022

As published on the official website of the Australian Foreign Ministry:

Russia’s invasion of Ukraine has been accompanied by a widespread disinformation campaign, both within Russia and internationally. Tragically for Russia, President Putin has shut down independent voices and locked everyday Russians into a world characterised by lies and disinformation.

The addition of sanctions on those responsible for this insidious tactic recognises the powerful impact that disinformation and propaganda can have in conflict.

The Australian Government is sanctioning 10 people of strategic interest to Russia for their role in encouraging hostility towards Ukraine and promoting pro-Kremlin propaganda to legitimise Russia’s invasion.

This includes driving and disseminating false narratives about the "de-Nazification" of Ukraine, making erroneous allegations of genocide against ethnic Russians in eastern Ukraine, and promoting the recognition of the so-called Donetsk People’s Republic and Luhansk People’s Republic as independent.

The Australian Government continues to work with digital platforms such as Facebook, Twitter and Google to take action to suspend the dissemination of content generated by Russian state media within Australia. [...]

So there you go. It's only 10 people now, but you never know where this is going to end, and at what moment saying anything not approved will become punishable in Australia.

Some are already taking sides. Pixy was trawling Instapundit comments, shitposting for Ukraine and globohomo. That is a serious problem for certain Meenuvia residents.

Posted by: Pete Zaitcev at 07:42 AM


November 19, 2021

The hickory continues to grow and reached about 4 ft in the 2021 season. Unfortunately, the leaves look a little diseased. Apparently that's about normal in Texas, which is a pity.

Posted by: Pete Zaitcev at 11:20 AM


August 02, 2021

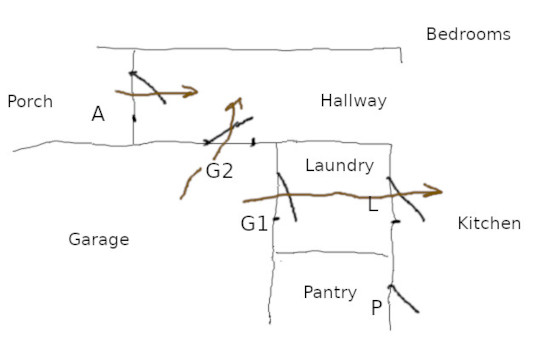

I lived in three free-standing houses with attached garages in the U.S., and all three had the same design feature: the door from the garage opened into the laundry, which then connected to the rest of the house, typically into the kitchen area.

But why? Isn't the garage supposed to be dirty while the laundry is supposed to be clean?

There must be a very good reason why Americans build their houses that way. But although I am curious about that reason, at this time it's no longer important, because I moved the door from position G1 to position G2 in the picture.

This allows one to disrobe in the mud room upon arrival by automobile. Also, it permits casual access to the garage without intruding into the laundry area. It's way more convenient, no matter how you look at it.

Posted by: Pete Zaitcev at 07:29 AM


July 22, 2021

The question of flying a light, personal airplane in a helmet comes up once in a while. As it happens, I have some experience, which I'm going to outline as bullet points for future reference.

Pros:

- You do not need sunglasses and your headset cups always contact your skull properly. You do not need to buy super expensive flying sunglasses with thin temples.

- Your chance of head injury is decreased in certain types of crashes (funnily enough I tested that by experience when I flipped the Carlson and smacked the runway with my head). This is particularly welcomed by solo backcountry guys.

- You can fly airplanes with no interior padding in turbulence, like the Cessna 162, where I bloodied my head quite well before the helmet times.

- You know who of your fellow pilots are dumb and not to be trusted when they try to make pitiful, inept jokes regarding the matter about which they know nothing.

- You never need to lift your glasses in order to look at backlit LCD displays in the panel. Just glance under the visor. So comfy. This only came about as iPads and the like proliferated. Obviously you can see the steam gauges through eyeglasses.

Cons:

- Not all headphones are compatible with helmets, so your choice of modern ANR headsets is limited.

- Either you carry an additional piece of luggage, or your flight bag is enormous with a separate compartment. This was always a sticking point for me more than anything, especially on ferry flights where you travel commercial with that helmet. Remember that you have to protect the visor and you don't want to check it in.

- If you're tall, you run a risk of scratching canopies in many low wing GA airplanes.

As for the choice of helmet, a Gentex is designed to save your head when you punch out of an F-22. A Gallet helmet is more appropriate, I think. But personally, I fly in a David Clark K-10, because it's cheap.

Posted by: Pete Zaitcev at 11:01 AM


July 14, 2021

Over the years of my career, I noticed one funny coincidence. If a company rejects me, they are going to fall on hard times. If a company extends me an offer of employment, they are going to prosper.

On the success side:

- MCST (although struggling, it still exists)

- Red Hat (purchased by IBM after a long run of success)

- 3PAR (the only one of its generation of storage start-ups, alongside Zambeel, etc., that reached the product phase and exited to acquisition; still exists!)

- Metabyte, of course.

On the failure side:

- Sun Microsystems (4 rejections, too: I pecked at them until they died)

- Transmeta (3 rejections)

- Zambeel (forgot about them until today)

- NUVIA (they actually exited into acquisition, but an ignominious one)

- And finally, Igneous (they were tough; took the curse 4 years to kill them)

Note that my score is not perfect: OVH is still around. Although perhaps it just takes a little time: I interviewed with them only a couple of years ago.

Also note that the rejecting companies do not fail because my great capabilities were not employed. As long as they decide to hire me, it is all good, even if I decline. It's the curse of rejection.

Posted by: Pete Zaitcev at 11:01 AM

